Unconfigured Nodes can easily be upgraded

Following on from the Foundation Central introduction, the nodes should be running Foundation 4.5.1 or later. However, the Foundation version on the CVM may be older, because factories ship software that can be a few months behind the latest release.

How can you get Foundation on the nodes up to a newer version before imaging?

Fortunately, upgrading is easy via the API, which can be accessed from the CLI or a browser. I’ll cover both methods below.

Part 1: The CVMs have Internet access

CLI Method with Internet Access

If the CVMs have internet access, the latest available update on the Nutanix Portal is installed automatically when the API is called. Here we start with a node that has Foundation 4.4.3 installed, and we want to update it to the latest available version (4.5.3 at the time of writing).

To determine the current version on the node:

nutanix@NTNX-B-CVM:~$ cat foundation/foundation_version

foundation-4.4.3-abc86afd

Or use the API to get the current version:

nutanix@NTNX-B-CVM:~$ curl -X GET --header "Accept: application/json" "http://172.16.9.57:8000/foundation/version" ; echo ""

4.4.3
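To decide whether a node needs the upgrade at all, the reported version can be compared against the 4.5.1 minimum. The following is a minimal sketch in POSIX shell. The function names `foundation_version_number` and `version_ge` are illustrative and not part of Foundation, and the sketch assumes the version file has the `foundation-<version>-<hash>` form shown above.

```shell
# Hypothetical helpers, not part of Foundation.

# Reduce a string such as "foundation-4.4.3-abc86afd" to "4.4.3".
foundation_version_number() {
    v=${1#[Ff]oundation-}       # drop the prefix (either capitalisation)
    printf '%s\n' "${v%%-*}"    # drop everything from the first "-" onwards
}

# Return 0 if dotted version $1 is greater than or equal to $2.
# Fields are compared numerically, left to right; missing fields count as 0.
version_ge() {
    a=$1
    b=$2
    while [ -n "$a" ] || [ -n "$b" ]; do
        fa=${a%%.*}             # leading field of each version
        fb=${b%%.*}
        if [ "$a" = "$fa" ]; then a=; else a=${a#*.}; fi
        if [ "$b" = "$fb" ]; then b=; else b=${b#*.}; fi
        fa=${fa:-0}
        fb=${fb:-0}
        if [ "$fa" -gt "$fb" ]; then return 0; fi
        if [ "$fa" -lt "$fb" ]; then return 1; fi
    done
    return 0                    # all fields equal
}
```

On a CVM this could be used as `version_ge "$(foundation_version_number "$(cat ~/foundation/foundation_version)")" 4.5.1 || echo "upgrade needed"`.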

Execute the API call to upgrade Foundation:

nutanix@NTNX-B-CVM:~$ curl -X GET --header "Accept: application/json" "http://172.16.9.57:8000/foundation/auto_update_foundation"

That’s all there is to it. Now wait roughly 2 to 15 minutes, depending on your connection speed to the Nutanix Portal, for the binary (~1.6 GB) to download and install, then verify:

nutanix@NTNX-B-CVM:~$ cat foundation/foundation_version

foundation-4.5.3-815d6c96
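Rather than checking by hand, the wait-and-verify step could be scripted by polling the version API. This is only a sketch: `get_version` and `wait_for_version` are hypothetical helper names, and it assumes the API may be unreachable for a while during the update and that the response body is the bare version string, possibly wrapped in quotes.

```shell
# Hypothetical helpers; only the /foundation/version URL comes from the article.
CVM_IP=${CVM_IP:-172.16.9.57}   # example address used in this article

# Fetch the running Foundation version; prints nothing if the call fails.
get_version() {
    curl -s --max-time 10 --header "Accept: application/json" \
        "http://${CVM_IP}:8000/foundation/version"
}

# Poll until get_version reports the target version, or give up.
# Usage: wait_for_version <target> <max_tries> <delay_seconds>
wait_for_version() {
    target=$1
    tries=$2
    delay=$3
    i=0
    while [ "$i" -lt "$tries" ]; do
        # Strip quotes and whitespace in case the body is a JSON string.
        current=$(get_version | tr -d '" \r\n')
        if [ "$current" = "$target" ]; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1                    # target version never appeared
}
```

For example, `wait_for_version 4.5.3 30 30` would check every 30 seconds for up to 15 minutes, which matches the upper end of the wait quoted above.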

Done!

GUI Method with Internet Access

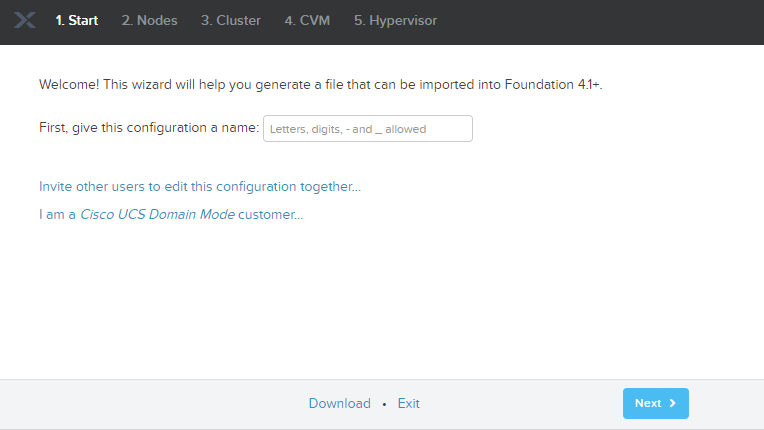

- Navigate to a CVM via http://your-cvm-ip:8000/docs

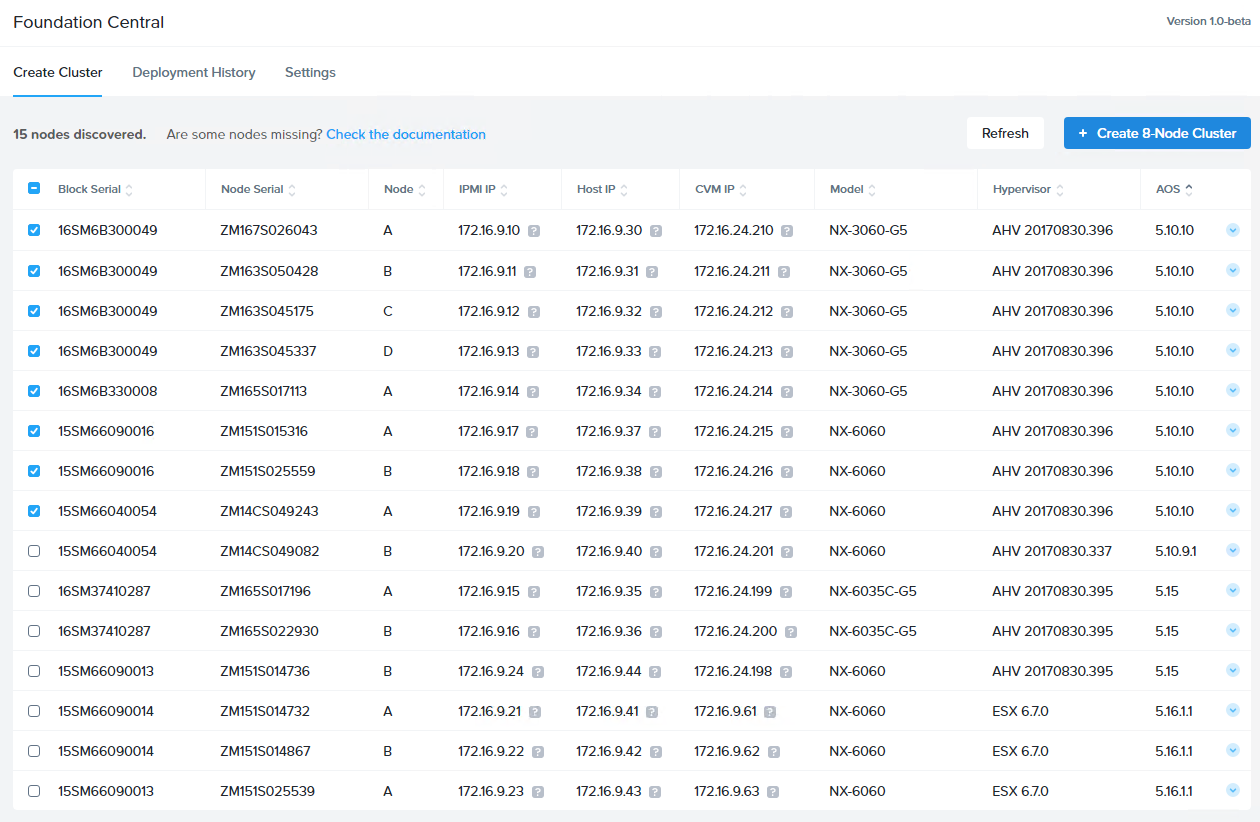

If an unconfigured node has a DHCP address and has registered with Foundation Central, you can determine the IP address of its CVM from the Foundation Central home page.

- You can check whether a Foundation update for the CVM is available using this API: /is_update_available

Find it on the API Explorer page, expand it and click the “Try it out!” button.

- Verify that you get Response Code 200. Be patient, as this can take ~10 to 30 seconds.

- If a Foundation upgrade is available, you can now upgrade the CVM’s Foundation to the latest version using this API: /auto_update_foundation. Again, expand it and click “Try it out!”

As with the CLI method, this can take several minutes depending on your connection to the Nutanix Portal. Verify that you get Response Code 200.

If the CVMs have internet access, you don’t need to provide any parameters to these APIs; just click “Try it out!” on that screen. After the upgrade, find the /version API on the Explorer page to verify that the latest version is now on the node.
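The same Response Code check can be done from a shell. The sketch below is hedged: `foundation_api_url` and `http_status` are hypothetical helpers, and it assumes /is_update_available sits under the same /foundation/ prefix as the /version and /auto_update_foundation calls in the CLI method. Confirm the exact path in the API Explorer before relying on it.

```shell
# Hypothetical helpers, not part of Foundation.

# Build the URL for an API endpoint on a CVM.
# Assumption: every endpoint shares the /foundation/ prefix on port 8000.
foundation_api_url() {
    printf 'http://%s:8000/foundation/%s\n' "$1" "${2#/}"
}

# Issue a GET request and print only the HTTP status code (e.g. 200).
http_status() {
    curl -s -o /dev/null -w '%{http_code}' --max-time 60 \
        --header "Accept: application/json" "$1"
}
```

A check might then look like `[ "$(http_status "$(foundation_api_url 172.16.9.57 is_update_available)")" = 200 ] && echo "call succeeded"`. The format of the response body is not covered here, so the sketch only inspects the status code.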

Done!

Part 2: If the CVMs have no direct Internet access

If the nodes cannot contact the Nutanix Portal directly, you will need to download the Foundation upgrade binary from the Nutanix Portal and apply the update manually.

For example, upgrading from v4.4.3 to v4.5.3 using a manual file:

- Get the “Foundation Upgrade for CVM or Standalone Foundation VM” file from the Nutanix Portal (e.g. foundation-4.5.3.tar.gz)

- Create a directory on the CVM you want to upgrade: /home/nutanix/foundation_updates

- Copy the foundation-<version>.tar.gz file to this location
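The staging steps above can be sketched as a small shell function to run on the CVM once the tarball has been transferred there. `stage_update` is a hypothetical name, and the file-name check simply assumes the `foundation-<version>.tar.gz` pattern mentioned above.

```shell
# Hypothetical helper, not part of Foundation.
# Usage: stage_update <path-to-tarball> [destination-directory]
stage_update() {
    src=$1
    dest_dir=${2:-/home/nutanix/foundation_updates}   # path from the article

    # Refuse anything that does not look like a Foundation upgrade tarball.
    case ${src##*/} in
        foundation-*.tar.gz) ;;
        *) echo "unexpected file name: $src" >&2; return 1 ;;
    esac

    # Create the directory if needed, then copy the tarball into it.
    mkdir -p "$dest_dir" && cp "$src" "$dest_dir/"
}
```

If you are copying from a workstation instead, something like `scp foundation-4.5.3.tar.gz nutanix@your-cvm-ip:foundation_updates/` should achieve the same result, assuming SSH access as the nutanix user and that the directory already exists.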

CLI method via manual update file

Now that we have the binary uploaded, we can initiate the upgrade.

nutanix@NTNX-B-CVM:~$ cat foundation/foundation_version

foundation-4.4.3-abc86afd

nutanix@NTNX-B-CVM:~$ ls ~/foundation_updates/foundation-4.5.3.tar.gz

nutanix@NTNX-B-CVM:~$ curl -X GET --header "Accept: application/json" "http://172.16.9.58:8000/foundation/auto_update_foundation?tar_file=foundation-4.5.3.tar.gz"

<wait ~1-2 minutes>

nutanix@NTNX-B-CVM:~$ cat foundation/foundation_version

foundation-4.5.3-815d6c96

Once complete, remember to delete the file you uploaded to /home/nutanix/foundation_updates to conserve CVM space.
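For repeated use, the manual-update call and the cleanup can be wrapped in two small helpers. This is a sketch under stated assumptions: both function names are hypothetical, and the only things taken from the article are the `tar_file` query parameter and the /home/nutanix/foundation_updates directory.

```shell
# Hypothetical helpers, not part of Foundation.

# Build the auto_update_foundation URL for a tarball already staged on the CVM.
# Only the file name is sent, never the directory part.
offline_update_url() {
    printf 'http://%s:8000/foundation/auto_update_foundation?tar_file=%s\n' \
        "$1" "${2##*/}"
}

# Delete a staged tarball once the upgrade has been verified.
# Usage: remove_staged_update <tarball-name> [directory]
remove_staged_update() {
    rm -f "${2:-/home/nutanix/foundation_updates}/${1##*/}"
}
```

Usage would be along the lines of `curl -X GET --header "Accept: application/json" "$(offline_update_url 172.16.9.58 foundation-4.5.3.tar.gz)"`, followed by `remove_staged_update foundation-4.5.3.tar.gz` after the new version has been confirmed.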

Done!

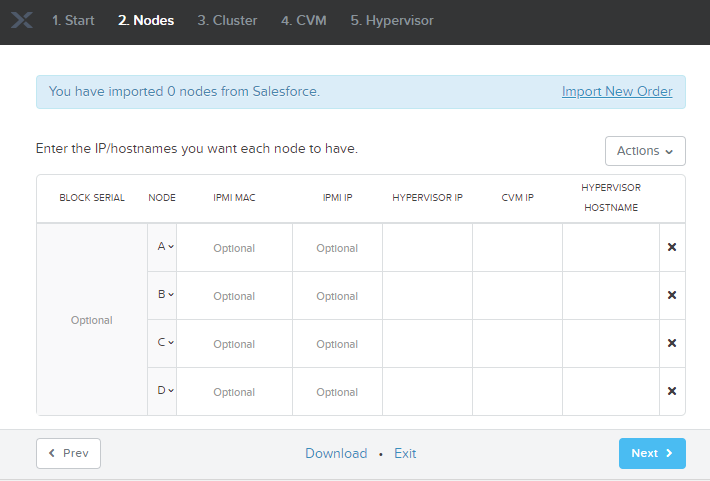

GUI method via manual update file

- Navigate to the CVM via http://your-cvm-ip:8000/docs and the API Explorer will appear. Expand the /auto_update_foundation section.

- Make the API call via /auto_update_foundation: enter the name of the uploaded file as the tar_file parameter and click “Try it out!”.

- Foundation will be upgraded after a few minutes. Verify by either querying the /version API or running ‘cat ~/foundation/foundation_version’ on the CVM.

- Done!

Once complete, remember to delete the file you uploaded to /home/nutanix/foundation_updates to conserve CVM space.

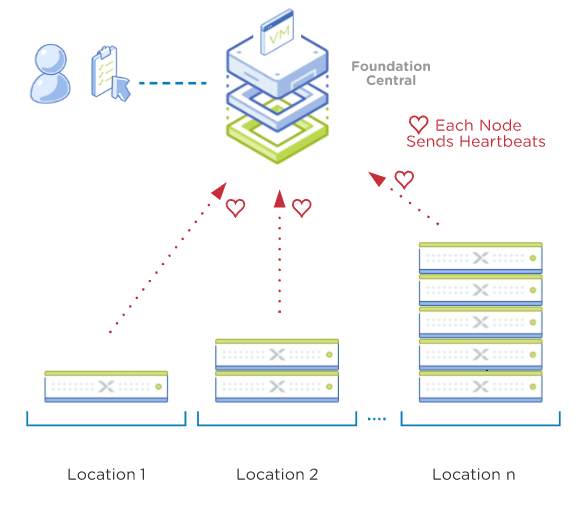

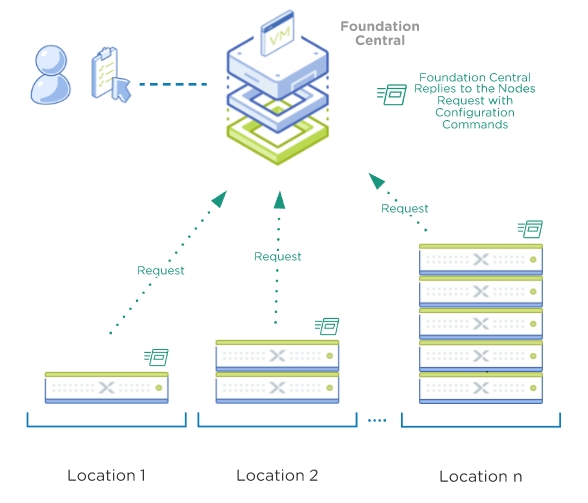

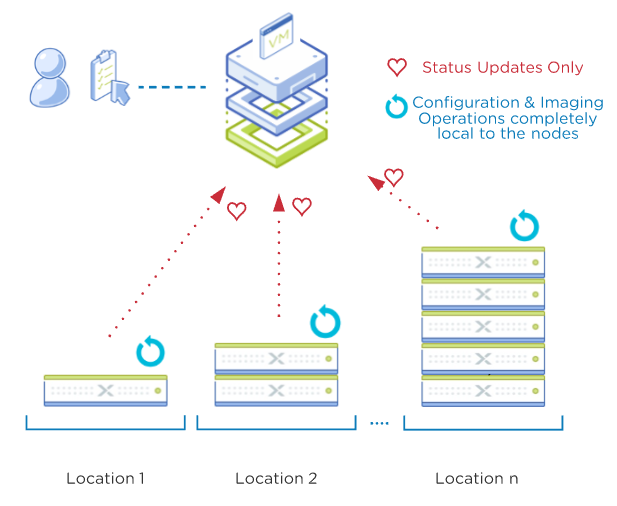

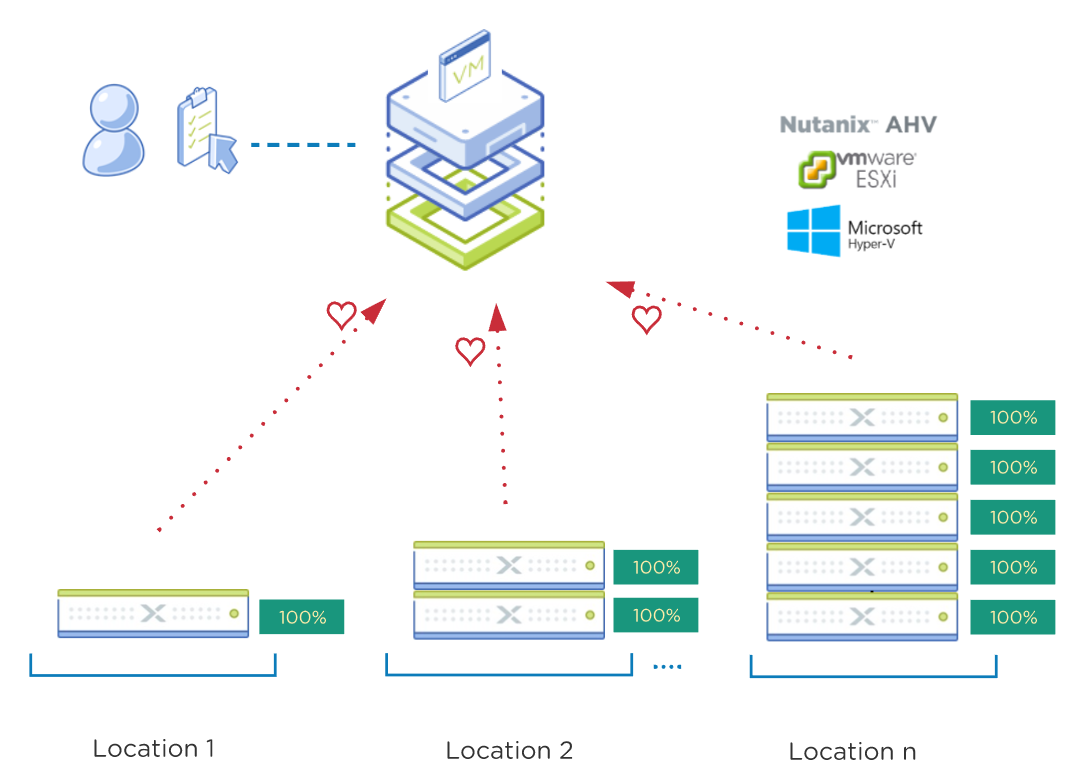

We plan to incorporate upgrading CVM Foundation on nodes detected by Foundation Central soon, so you can centrally update all your nodes easily.